The vendor signed the BAA. The model runs in a SOC 2 environment. The data is encrypted at rest. And a billing clerk still pasted a patient's complete encounter history into the prompt box this morning.

Governance Conversations Start in the Wrong Place

Most AI governance conversations in healthcare start with the vendor. Is the model HIPAA compliant? Will the vendor sign a BAA? Where is the data stored?

Those are fair questions. They are just not the first questions.

The first question is what happens before the vendor ever sees anything. In the prompt itself. In the path between the employee and the system. In whether anyone has classified the data, enforced policy, or recorded the decision before the request leaves the organization.

This is less a failure of security leadership than a failure in how the conversation is usually structured. Vendor review feels familiar. Procurement has a process. Legal has a process. Security questionnaires have a process. So organizations start where they have muscle memory.

But a signed BAA does not classify the prompt for you.

Three Controls, in Order

If you want a better mental model for healthcare AI governance, it helps to think in three controls, in order.

Control 1: What Is This Data, Really?

Before you can decide where data may go, you have to know what it is. Not in a generic sense. Not "this is a chat message." In a meaningful sense. This prompt contains a medical record number, a diagnosis, a provider name, and enough context to constitute protected health information.

And meaningful classification means understanding that in a clinical note linked to an identifiable patient, everything is PHI. The diagnoses, the medications, the lab values. Not just the identifiers in the header. Remove every identifier to the standard HIPAA's de-identification rules require, so that what remains cannot reasonably identify the patient, and the clinical content is no longer PHI. Leave them in, and the entire document is protected. Most employees do not understand this distinction, and most classification systems do not enforce it.

Asking employees to self-classify every prompt correctly, every time, in live workflows, is not a control strategy. It is a hope strategy.
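To make the idea concrete, here is a minimal sketch of automated classification in Python. It is an illustration under loud assumptions, not a production detector: the identifier patterns, the clinical term list, and the label names are all hypothetical, and real PHI detection needs far broader coverage (names, dates, addresses, free-text context). What it does show is the rule described above: once any identifier is present, the whole prompt is labeled PHI, not just the matching line.

```python
import re
from dataclasses import dataclass

# Hypothetical identifier patterns, for illustration only.
IDENTIFIER_PATTERNS = {
    "mrn": re.compile(r"\bMRN[:#\s]*\d{6,10}\b", re.IGNORECASE),
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "phone": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
}

# Hypothetical clinical vocabulary; a stand-in for a real terminology service.
CLINICAL_TERMS = ("diagnosis", "diabetes", "metformin", "a1c", "hypertension")


@dataclass(frozen=True)
class Classification:
    label: str          # "PHI", "CLINICAL_NO_IDENTIFIERS", or "GENERAL"
    identifiers: tuple  # names of the identifier patterns that matched


def classify_prompt(text: str) -> Classification:
    """Label the whole prompt, not individual fields."""
    found = tuple(
        sorted(name for name, pattern in IDENTIFIER_PATTERNS.items()
               if pattern.search(text))
    )
    if found:
        # Any identifier makes the entire prompt protected.
        return Classification("PHI", found)
    if any(term in text.lower() for term in CLINICAL_TERMS):
        return Classification("CLINICAL_NO_IDENTIFIERS", ())
    return Classification("GENERAL", ())


if __name__ == "__main__":
    print(classify_prompt("Summarize: MRN: 00482917, diagnosis type 2 diabetes."))
    print(classify_prompt("What is the usual starting dose of metformin?"))
```

The point of the sketch is the placement, not the patterns: the label is computed by the system on every prompt, so nothing depends on the employee recognizing PHI in the moment.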

Control 2: Is This Person Authorized to Use This Data This Way?

A person may be fully authorized to view information in the EHR and still not be authorized to send that same information to an external AI service for a given purpose. AI creates a new decision point that older authorization models do not handle well.

This is where organizations discover that permission to access is not the same as permission to transmit.
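A small Python sketch can show why the two permissions have to be modeled separately. Everything here is assumed for illustration: the role names, the purposes, and the policy table are hypothetical and do not come from any real EHR or policy engine. The check defaults to deny, so a role and data label with no explicit entry cannot transmit anything.

```python
from dataclasses import dataclass

# Hypothetical: roles allowed to view records in the EHR.
EHR_VIEW_ROLES = {"clinician", "billing_clerk"}

# Hypothetical policy table: (role, data label) -> purposes for which
# sending that data to an external AI service is permitted.
TRANSMIT_POLICY = {
    ("clinician", "PHI"): {"clinical_summary"},
    ("billing_clerk", "PHI"): set(),  # may view, may not transmit
    ("billing_clerk", "GENERAL"): {"drafting", "research"},
}


@dataclass(frozen=True)
class Request:
    role: str
    data_label: str
    purpose: str


def may_view(role: str) -> bool:
    """Access decision: can this role see the record at all?"""
    return role in EHR_VIEW_ROLES


def may_transmit(request: Request) -> bool:
    """Transmission decision: default deny unless explicitly allowed."""
    allowed = TRANSMIT_POLICY.get((request.role, request.data_label), set())
    return request.purpose in allowed


if __name__ == "__main__":
    clerk = Request("billing_clerk", "PHI", "drafting")
    print(may_view(clerk.role), may_transmit(clerk))
```

In this sketch the billing clerk passes the view check and fails the transmit check, which is exactly the gap an access-only authorization model cannot express.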

Control 3: Where Is It Safe to Send This?

Now the vendor question matters. But now it appears in the right place in the sequence. And it is not a static answer. The same data might be safe for one provider that has a BAA and keeps data in region, but not for another that runs a public API with no compliance agreement. That routing decision should be automatic, based on what classification found, not left to the employee.

Once the organization knows what the data is and whether this use is authorized, it can make a real decision about destination. Public model, enterprise model, internal model, blocked entirely. The destination depends on the data. Without those first two controls, the vendor review is incomplete by definition.
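As a rough sketch of what automatic routing could look like, the Python below picks a destination from the data label instead of from the employee's choice. The destination names, the `has_baa` and `in_region` attributes, and the eligibility rule are assumptions made for illustration; a real policy would carry more conditions. A return value of `None` means the request is blocked.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Destination:
    name: str
    has_baa: bool
    in_region: bool


# Hypothetical catalog, listed in order of preference.
DESTINATIONS = [
    Destination("enterprise-model", has_baa=True, in_region=True),
    Destination("public-api", has_baa=False, in_region=False),
]


def eligible(dest: Destination, data_label: str) -> bool:
    """Assumed rule: PHI needs a BAA and in-region storage."""
    if data_label == "PHI":
        return dest.has_baa and dest.in_region
    return True


def route(data_label: str, authorized: bool, requested: str,
          catalog: Optional[List[Destination]] = None) -> Optional[Destination]:
    """Return the requested destination if eligible, else a fallback, else None."""
    if not authorized:
        return None
    catalog = DESTINATIONS if catalog is None else catalog
    wanted = next((d for d in catalog if d.name == requested), None)
    if wanted is not None and eligible(wanted, data_label):
        return wanted
    return next((d for d in catalog if eligible(d, data_label)), None)


if __name__ == "__main__":
    print(route("PHI", True, "public-api"))
    print(route("GENERAL", True, "public-api"))
```

Note that the function takes the classification label and the authorization result as inputs. Without them it has nothing to decide on, which is the sense in which vendor review alone is incomplete.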

The Walled Garden Problem

Most vendors selling "HIPAA-compliant AI" are selling a walled garden. Their tool is compliant. Use it and you are covered. But the moment an employee opens a different AI tool, whether that is ChatGPT, Copilot, Gemini, or whatever launches next quarter, the compliance story collapses. Your employees are not using one AI tool. They are using whichever one answers their question fastest.

Shadow AI is not an edge case. It is the default behavior. And a walled garden does not help when people are climbing over the wall every day.

This is the architectural blind spot: enforcement that lives inside one AI tool only enforces on interactions with that tool.

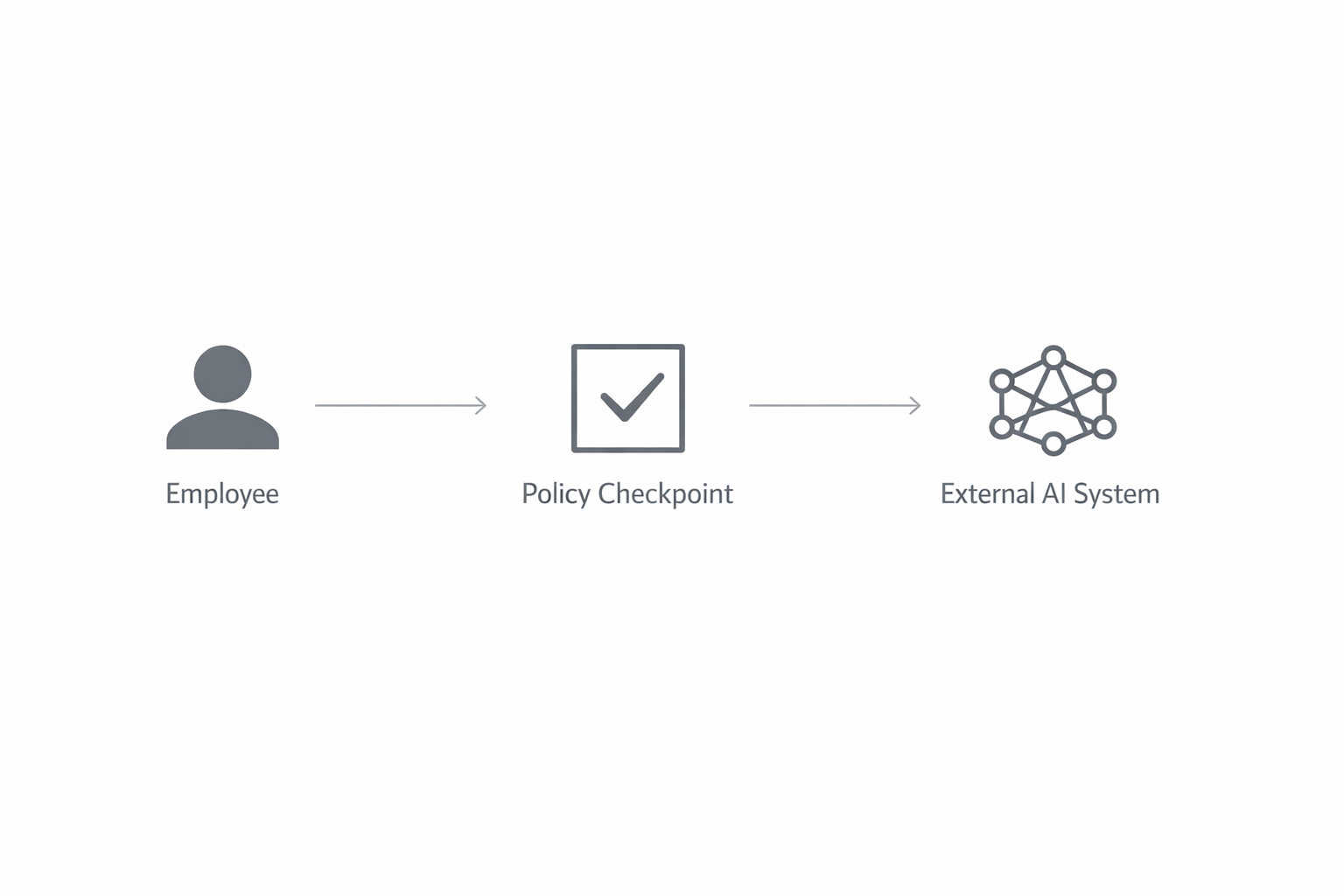

The architectural answer is an enforcement layer that is independent of any single AI vendor. Something that sits between users and all AI models, classifying data and enforcing policy regardless of where the request is headed. This is what the industry is beginning to call an AI control plane. It is a fundamentally different architecture from a compliant chatbot.

The Order Matters

The order of the three controls matters because every shortcut creates a different failure mode.

- Skip classification, and you rely on employees to spot every risky prompt themselves. Your enforcement layer cannot route what it cannot classify.

- Skip authorization, and anyone with AI access can send almost anything anywhere. That is not governance. That is a flat network.

- Focus only on the vendor, and you may end up with a technically approved destination receiving data that never should have left the environment in the first place. And you have no visibility into the same data flowing into the other AI tools your employees use, the ones you have no agreements with.

The control plane pattern works because it enforces at every level. Classification determines what the data is. Authorization determines whether this use is permitted. Routing determines which provider, if any, should receive it. The three controls compose into a single enforcement decision that applies to every AI interaction, regardless of the destination.
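The composition can be sketched in a few lines of Python. This is a simplified illustration, not a description of any particular product: the `enforce` function, the `Decision` record, and the toy stand-ins for the three controls are all hypothetical. The three controls run in order, the first failure stops the request, and every outcome is appended to an audit log so the decision is recorded before anything leaves the organization.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass(frozen=True)
class Decision:
    label: str
    authorized: bool
    destination: Optional[str]  # None means the request was blocked
    reason: str


def enforce(prompt: str, role: str, purpose: str, *,
            classify: Callable[[str], str],
            authorize: Callable[[str, str, str], bool],
            route: Callable[[str], Optional[str]],
            audit_log: List[Decision]) -> Decision:
    """Run classification, authorization, and routing in that order."""
    label = classify(prompt)
    if not authorize(role, label, purpose):
        decision = Decision(label, False, None, "not authorized to transmit")
    else:
        destination = route(label)
        if destination is None:
            decision = Decision(label, True, None, "no compliant destination")
        else:
            decision = Decision(label, True, destination, "allowed")
    audit_log.append(decision)  # record every outcome, including blocks
    return decision


# Toy stand-ins for the three controls (assumptions, for illustration only).
def toy_classify(prompt: str) -> str:
    return "PHI" if "MRN" in prompt else "GENERAL"


def toy_authorize(role: str, label: str, purpose: str) -> bool:
    return label != "PHI" or role == "clinician"


def toy_route(label: str) -> Optional[str]:
    return "enterprise-model" if label == "PHI" else "public-api"


if __name__ == "__main__":
    log: List[Decision] = []
    print(enforce("Summarize the chart for MRN 00482917", "billing_clerk",
                  "drafting", classify=toy_classify, authorize=toy_authorize,
                  route=toy_route, audit_log=log))
```

Because the controls are passed in as functions, the same enforcement path applies no matter which AI tool the request was headed for. That independence from any one destination is the design choice the control plane pattern depends on.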

Better Questions for Leadership

This is also where the leadership conversation needs to change.

Instead of asking whether the organization uses "HIPAA-compliant" AI tools, leadership should ask whether unauthorized data can reach any AI system, including the ones they did not approve.

Instead of asking whether employees have been trained on AI policy, leadership should ask what percentage of staff can actually identify PHI in realistic AI interactions and whether that has been measured.

Instead of asking whether an AI policy exists, leadership should ask whether there is an enforcement layer between employees and external AI that applies policy automatically, even when employees are not using the approved tool.

Those are harder questions. They are also much more useful. They shift the conversation from box-checking to proof. And the last one is the most important, because enforcement that depends on employee cooperation is not enforcement. It is a suggestion.

This Isn't Just Healthcare

One note for readers outside healthcare who have followed this series: the three-control framework described here is not HIPAA-specific. Replace "PHI" with "classified information" and you have the same problem in government. Replace it with "material non-public information" and you have the same problem in finance. Replace it with "privileged communications" and you have the same problem in legal.

The pattern is identical: classify the data, evaluate authorization, route to compliant destinations. The detection rules and routing policies change by industry. The architecture does not. Any organization where sensitive data might flow into an AI system, which increasingly means every organization, needs to think about enforcement at this layer.

From Intent to Evidence

For most organizations, the smartest place to start is not with another procurement workflow. It is with measurement. Understand the gap first. Find out how much of your AI usage is even visible to your compliance team. Then decide what kind of control environment needs to exist between the employee and the prompt.

We built our assessment around exactly this idea. It gives organizations a way to evaluate real readiness before they try to solve the wrong problem in the wrong order.

Take the AI Readiness Assessment →

This is the final post in a three-part series. The first covers the shadow AI patterns already happening in most healthcare organizations. The second covers the four questions most organizations cannot answer about their AI readiness.

About Three Gates

Three Gates is an AI control plane for regulated industries. Before any AI model sees your data, Three Gates classifies what's sensitive, checks whether the request is authorized, and routes it to a compliant destination. The architecture is vendor-independent and deployment-agnostic. Healthcare is the first vertical; government, legal, and financial verticals are on the roadmap. The goal is not to restrict AI usage, but to make it possible to say yes to AI safely.